
From “one AI agent” to an AI operations stack

Most teams start the same way: a single agentic system connected to a few docs, a handful of FAQs, and maybe a support inbox for exceptions. It works—until it doesn’t. The moment you need the bot to answer with confidence, trigger real actions, or pull metrics from internal systems, the “one bot” model turns into a patchwork of scripts, prompt tweaks, and manual escalations.

Cere Insight 2.0 is built for the next stage: an AI operations platform where knowledge, orchestration, agentic builders, and analytics live together under one governance model. In this post you’ll learn how Cere Insight 2.0 ties these capabilities into a cohesive system—and how to roll it out progressively so you can ship value quickly without losing control.

The problem: AI initiatives fail at the seams

Teams rarely struggle with generating text. They struggle with everything around it: keeping answers grounded in the right sources, ensuring the right users see the right information, turning intent into actions across tools, and proving outcomes. When systems are siloed, the seams become failure points:

- Knowledge drift: answers aren’t linked to authoritative content, so users lose trust after a few wrong or outdated responses.

- Action gap: the bot can explain a process but can’t do the process—creating tickets, updating records, or routing requests.

- Data bottlenecks: leadership asks “what changed this week?” and the AI can’t access governed metrics, or it responds with unverified guesses.

- Fragmented handoffs: conversations happen in one place, escalations somewhere else, and there’s no consistent audit trail.

- Governance and scale issues: what works for one team breaks when multiple organizations, roles, and data boundaries are involved.

Cere Insight 2.0 is designed to reduce these seam failures by treating AI as a platform capability—grounded knowledge, orchestrated workflows, agentic flows, analytics jobs, and human handoff—built with multi-tenant governance from day one.

How Cere Insight 2.0 approaches it

1) Knowledge base for grounded answers

When users ask “How do I request access?” or “What’s our refund policy?”, they don’t want a creative response—they want the correct one. Cere Insight’s knowledge base and RAG pipeline are designed to produce answers grounded in your organization’s content, reducing hallucinations and making responses explainable. This becomes the foundation layer for every other capability: workflows can reference knowledge, agents can retrieve context, and support teams can rely on consistent information.
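To make "grounded" concrete, here is a minimal sketch of an answer function that only replies from retrieved content and always returns its sources. It is not Cere Insight's API: `Doc`, `retrieve`, and `grounded_answer` are hypothetical names, the sample documents are invented, and a simple word-overlap score stands in for whatever retrieval a real RAG pipeline would use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str  # hypothetical identifier for a knowledge base article
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase words with surrounding punctuation removed."""
    return {w.strip(".,?!:;").lower() for w in text.split()} - {""}


def retrieve(question: str, docs: list[Doc], min_overlap: int = 2) -> list[Doc]:
    """Toy retrieval: rank docs by how many words they share with the question."""
    q = tokenize(question)
    scored = [(len(q & tokenize(d.text)), d) for d in docs]
    hits = [(score, d) for score, d in scored if score >= min_overlap]
    hits.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in hits]


def grounded_answer(question: str, docs: list[Doc]) -> dict:
    """Answer only from retrieved content; otherwise flag for escalation."""
    hits = retrieve(question, docs)
    if not hits:
        return {"answer": None, "sources": [], "escalate": True}
    return {
        "answer": hits[0].text,  # a real system would generate from this context
        "sources": [d.doc_id for d in hits],
        "escalate": False,
    }


# Invented sample content, for illustration only.
KB = [
    Doc("kb/refund-policy", "Refund policy: refunds are handled by the billing team."),
    Doc("kb/access", "Access requests: submit the access form to the IT team."),
]
```

The design point is the return shape rather than the scoring: every answer carries its source IDs, and a question with no supporting content produces an explicit escalation flag instead of a guess.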

2) Workflow orchestration across modules for multi-step automation

Real work is rarely a single response. It’s multi-step: collect missing details, validate a policy, create a record, notify stakeholders, and confirm completion. Cere Insight’s workflow orchestration coordinates these steps across modules so you can automate processes reliably. Orchestration turns conversational intent into repeatable operations—without relying on one giant prompt to “figure everything out.”

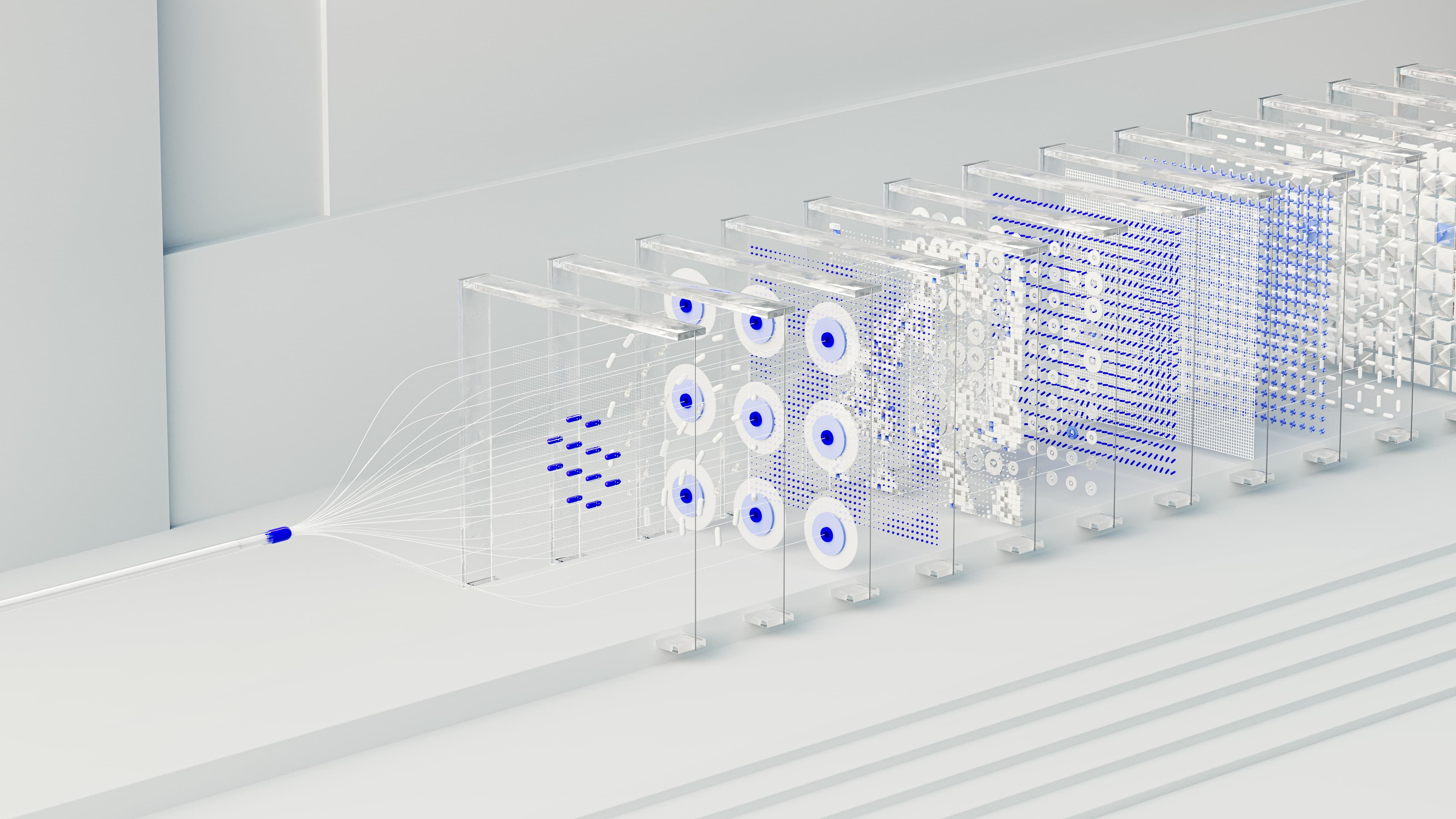
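The multi-step pattern described above (validate, create a record, notify, confirm) can be sketched as a small step runner. This is an illustrative sketch, not Cere Insight's orchestration engine: `WorkflowRun`, `StepError`, the step functions, and the placeholder record ID are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Callable


class StepError(Exception):
    """Raised by a step when it cannot proceed."""


@dataclass
class WorkflowRun:
    data: dict
    status: str = "pending"
    log: list[str] = field(default_factory=list)


def validate(run: WorkflowRun) -> None:
    # Hypothetical required fields for an access request.
    missing = [k for k in ("requester", "system") if not run.data.get(k)]
    if missing:
        raise StepError(f"missing fields: {', '.join(missing)}")


def create_record(run: WorkflowRun) -> None:
    run.data["record_id"] = "REQ-0001"  # placeholder; a real step would call a system of record


def notify(run: WorkflowRun) -> None:
    run.data["notified"] = True  # placeholder for a message to stakeholders


STEPS: list[Callable[[WorkflowRun], None]] = [validate, create_record, notify]


def run_workflow(run: WorkflowRun, steps: list[Callable[[WorkflowRun], None]]) -> WorkflowRun:
    """Run steps in order; stop at the first failure and mark the run for a human."""
    run.status = "running"
    for step in steps:
        try:
            step(run)
            run.log.append(f"{step.__name__}: ok")
        except StepError as exc:
            run.log.append(f"{step.__name__}: failed ({exc})")
            run.status = "needs_human"
            return run
    run.status = "completed"
    return run
```

Each step is a small, testable function, and the runner records a log entry per step, so a failed run shows exactly where it stopped and why.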

3) AI Builder for router-led multi-agent flows

Not every request should be handled the same way. Some questions should be answered from knowledge, some should trigger workflows, and some should become analytics jobs. The AI Builder is where teams design these behaviors using router + tools + integrations patterns. A router can classify intent (e.g., “policy question” vs. “create ticket” vs. “metrics request”), then dispatch to the right agent/toolchain. This approach keeps systems maintainable: you evolve individual tools and agents without rewriting everything.
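A rough sketch of the router pattern follows. The intent labels mirror the examples in the paragraph above; everything else is an assumption for illustration: keyword matching stands in for a model-based classifier, and the handler functions are hypothetical stubs rather than AI Builder components.

```python
from typing import Callable


def classify_intent(message: str) -> str:
    """Toy classifier: keyword rules stand in for a real intent model."""
    text = message.lower()
    if any(k in text for k in ("create ticket", "open a ticket", "file a ticket")):
        return "create_ticket"
    if any(k in text for k in ("how many", "metrics", "this week")):
        return "metrics_request"
    if any(k in text for k in ("policy", "how do i")):
        return "policy_question"
    return "unknown"


# Hypothetical handlers; each would wrap a specialized agent or toolchain.
def answer_from_knowledge(message: str) -> str:
    return f"knowledge: {message}"


def start_ticket_workflow(message: str) -> str:
    return f"workflow: {message}"


def submit_analytics_job(message: str) -> str:
    return f"analytics: {message}"


def hand_off_to_human(message: str) -> str:
    return f"handoff: {message}"


HANDLERS: dict[str, Callable[[str], str]] = {
    "policy_question": answer_from_knowledge,
    "create_ticket": start_ticket_workflow,
    "metrics_request": submit_analytics_job,
}


def route(message: str) -> tuple[str, str]:
    """Classify the message, then dispatch; unknown intents go to a human."""
    intent = classify_intent(message)
    handler = HANDLERS.get(intent, hand_off_to_human)
    return intent, handler(message)
```

Adding a capability in this shape means registering one more handler and one more intent, without touching the existing ones.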

4) Analytics bots that turn natural language into SQL (async jobs)

Analytics is where trust is easiest to lose and hardest to regain. Cere Insight’s analytics bots translate natural language questions into SQL over your organization’s data sources and execute them as asynchronous jobs. This matters operationally: analytics queries can be expensive, long-running, or require approvals and guardrails. Running them asynchronously supports better reliability, clearer status updates, and safer operational controls than trying to “answer instantly” at all costs.
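The asynchronous job lifecycle can be sketched with the Python standard library. This is a simplified illustration under stated assumptions: the natural-language-to-SQL step is omitted (the job starts from SQL text), the `Job` class and status names are hypothetical, the read-only check is a deliberately naive guardrail, and an in-memory SQLite table with invented rows stands in for a governed data source.

```python
import asyncio
import sqlite3
from dataclasses import dataclass
from typing import Optional


@dataclass
class Job:
    sql: str
    status: str = "created"
    rows: Optional[list] = None
    error: Optional[str] = None


def is_read_only(sql: str) -> bool:
    """Naive guardrail: accept only statements that start with SELECT."""
    return sql.lstrip().lower().startswith("select")


async def run_job(job: Job, conn: sqlite3.Connection) -> None:
    """Move a job through created -> running -> results_ready / failed / rejected."""
    if not is_read_only(job.sql):
        job.status, job.error = "rejected", "only SELECT statements are allowed"
        return
    job.status = "running"
    await asyncio.sleep(0)  # yield to the event loop; a real query would run off-loop
    try:
        job.rows = conn.execute(job.sql).fetchall()
        job.status = "results_ready"
    except sqlite3.Error as exc:
        job.status, job.error = "failed", str(exc)


async def demo() -> tuple[Job, list[str]]:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER, amount REAL)")
    conn.executemany("INSERT INTO orders VALUES (?, ?)", [(1, 10.0), (2, 15.5)])  # invented rows
    job = Job(sql="SELECT COUNT(*), SUM(amount) FROM orders")
    task = asyncio.create_task(run_job(job, conn))  # submit without blocking the caller
    seen = [job.status]  # the caller can report "created" right away
    await task
    seen.append(job.status)
    return job, seen


if __name__ == "__main__":
    finished, statuses = asyncio.run(demo())
    print(statuses, finished.rows)
```

Because the caller gets a job object back immediately, it can show status updates while the query runs, and the rejected state gives review controls an obvious place to attach.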

5) Human handoff as a first-class operational path

Even the best automation needs a human fallback. Cere Insight supports live agent handoff so users can be routed to a human when confidence is low, policies require review, or a workflow hits an exception. The key is that escalation isn’t a dead end: the human agent receives the conversation context, relevant retrieved knowledge, and the status of any running workflow or analytics job, so resolution is fast and consistent.
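As a sketch of what a context-carrying handoff could look like, the example below checks the three escalation triggers named above and bundles the context for the human agent. The field names, the `0.6` confidence threshold, and the function names are assumptions for illustration, not Cere Insight's actual schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TurnResult:
    confidence: float                      # hypothetical score between 0 and 1
    needs_policy_review: bool = False
    workflow_error: Optional[str] = None


def handoff_reason(result: TurnResult, min_confidence: float = 0.6) -> Optional[str]:
    """Return why this turn should go to a human, or None if it should not."""
    if result.workflow_error:
        return f"workflow exception: {result.workflow_error}"
    if result.needs_policy_review:
        return "policy requires review"
    if result.confidence < min_confidence:
        return "low confidence"
    return None


def build_handoff(
    reason: str,
    transcript: list[str],
    sources: list[str],
    job_status: Optional[str],
) -> dict:
    """Bundle everything the human agent needs so they do not start from scratch."""
    return {
        "reason": reason,
        "transcript": list(transcript),
        "sources": list(sources),
        "job_status": job_status,
    }
```

A caller would compute `handoff_reason` after each turn and, when it returns a reason, pass the transcript, retrieved source IDs, and any job status into `build_handoff` before routing to the live agent queue.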

Practical rollout: patterns, pitfalls, and a checklist

- Start with grounded answers before automation. A reliable knowledge base + RAG layer builds trust fast and reduces support load. If you automate workflows on top of shaky answers, you’ll scale mistakes. Adopt first: knowledge base hygiene, permission boundaries, and “show your sources” response habits.

- Use a router to prevent “one prompt to rule them all.” In the AI Builder, route by intent to specialized agents/tools (knowledge, workflow, analytics, escalation). This keeps behavior predictable and reduces regressions when you add new capabilities.

- Design workflows as products, not scripts. Orchestration should include input validation, status updates, and clear failure paths (including live agent handoff). A common pitfall is building workflows that work in demos but fail in production due to missing fields or ambiguous user requests.

- Treat analytics as asynchronous by default. When bots generate SQL, build user expectations around “job created → running → results ready.” This is better for performance and governance, and it creates a natural place for review controls. Pitfall: letting synchronous “instant answers” pressure you into returning partial or unverified numbers.

- Make governance visible early. Define who can connect data sources, who can publish agent flows, and who can run analytics jobs, then enforce org-level boundaries between tenants. Pitfall: retrofitting permissions after teams have already shared flows and connectors across tenants.
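The governance item in the checklist above can be made concrete with a small permission check. This is a minimal sketch under assumptions: the role names, the role-to-action mapping, and the `User` and `Resource` types are hypothetical, and the three actions are taken from the checklist wording rather than from a documented Cere Insight permission model.

```python
from dataclasses import dataclass

# Hypothetical role-to-action mapping; adjust to your own governance model.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "admin": {"connect_data_source", "publish_flow", "run_analytics_job"},
    "builder": {"publish_flow", "run_analytics_job"},
    "analyst": {"run_analytics_job"},
}


@dataclass(frozen=True)
class User:
    name: str
    org_id: str
    role: str


@dataclass(frozen=True)
class Resource:
    name: str
    org_id: str


def is_allowed(user: User, action: str, resource: Resource) -> bool:
    """Check the tenant boundary first, then the role's allowed actions."""
    if user.org_id != resource.org_id:
        return False  # never cross org boundaries, regardless of role
    return action in ROLE_PERMISSIONS.get(user.role, set())
```

Checking the org boundary before the role means a powerful role in one tenant never grants access to another tenant's connectors or flows, which is the retrofit problem the pitfall describes.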

Closing: who Cere Insight 2.0 is for

Cere Insight 2.0 is for teams that have outgrown isolated agents and need an AI platform that can answer, act, and analyze—under real operational constraints. If you’re supporting multiple organizations, need strong permissioning, want automation you can trust, and require analytics grounded in your own data, Cere Insight 2.0 brings the pieces together so your AI efforts scale without fragmentation.

If you’re adopting progressively: begin with the knowledge base + RAG layer, then add orchestration for high-volume workflows, introduce router-led agent flows in the AI Builder, and finally expand into analytics bots and live agent operations as governance matures.